Update 15/03/24: BEAR Challenge 2024 – registration open!

If you are an undergraduate or master's student from any discipline and are interested in joining the BEAR Challenge 2024, please see our Eventbrite registration page for details.

---

After a two-year hiatus, the BEAR Challenge is back! The popular three-day event was held on June 21st-23rd and attended by seven teams of 3-5 undergraduate and taught postgraduate students (see below). The teams tackled a heady array of challenges in machine learning, deep learning, data engineering, parallelisation and computer cluster design, using the GPU resources available on the EPSRC Tier 2 Baskerville High Performance Computer (HPC).

The challenges were led by both Advanced Research Computing team members and industry experts. Read on to find out more about each challenge from the perspective of its helpers and leaders…

Day 1 (AM) Challenge 1 – Getting Started on Baskerville

“From my point of view, it was great to see months of preparation come together in a satisfying, smoothly run event. I ran Challenge 1 and also set up people's Baskerville accounts. This was pivotal to the success of the event, since setup must run as smoothly as possible. Luckily, we had enough time to get everyone's account sorted and solve any issues users had.

The aim of the challenge was to introduce users to Jupyter Notebooks, walk them through setting up a conda environment, and have them complete the Pandas and Pizzas challenge. I feel the best sign of this challenge's success was how smoothly all the other challenges went. It looked to be a fun and educational event for all of the participants, and I look forward to contributing to future BEAR Challenges.”

Gavin Yearwood

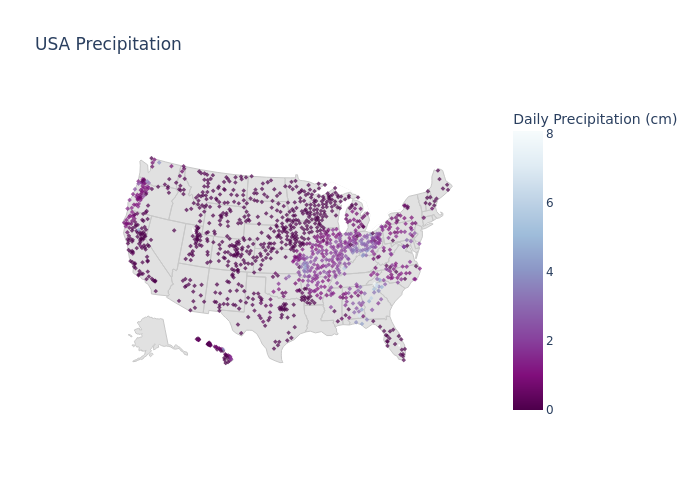

Day 1 (PM) Challenge 2 – Data Engineering with GPUs

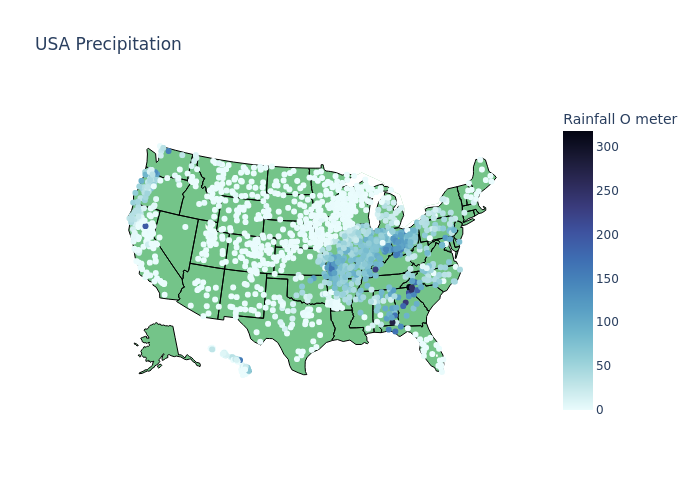

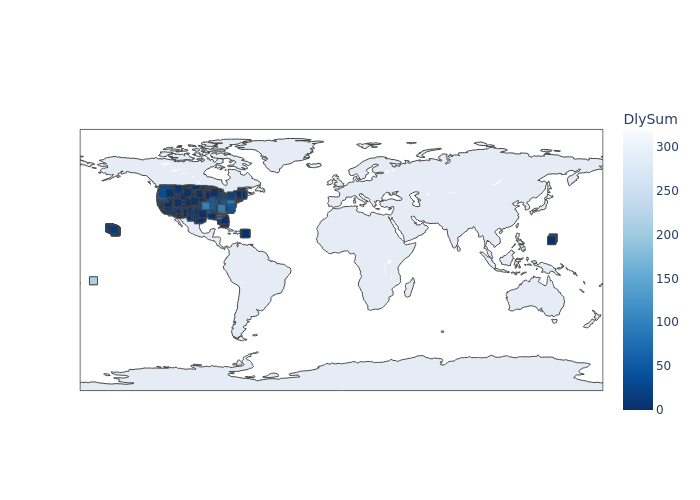

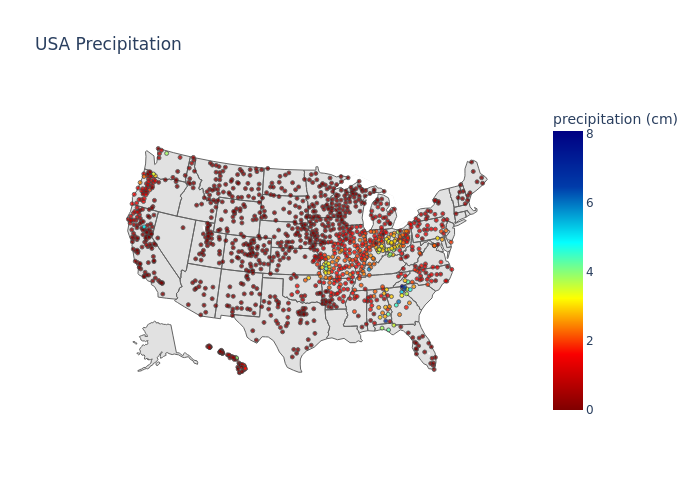

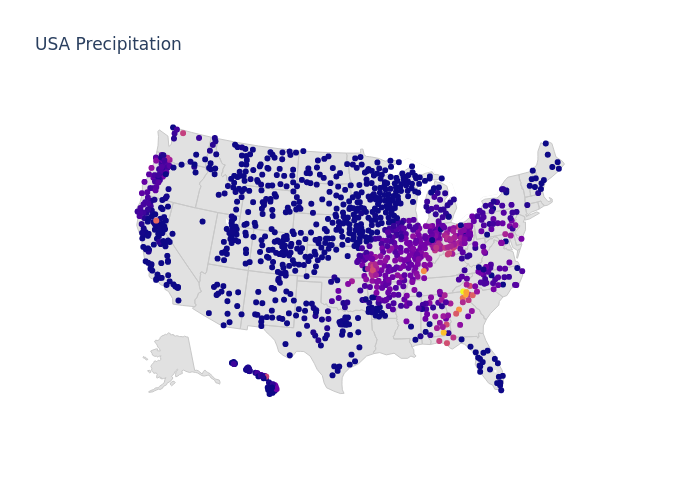

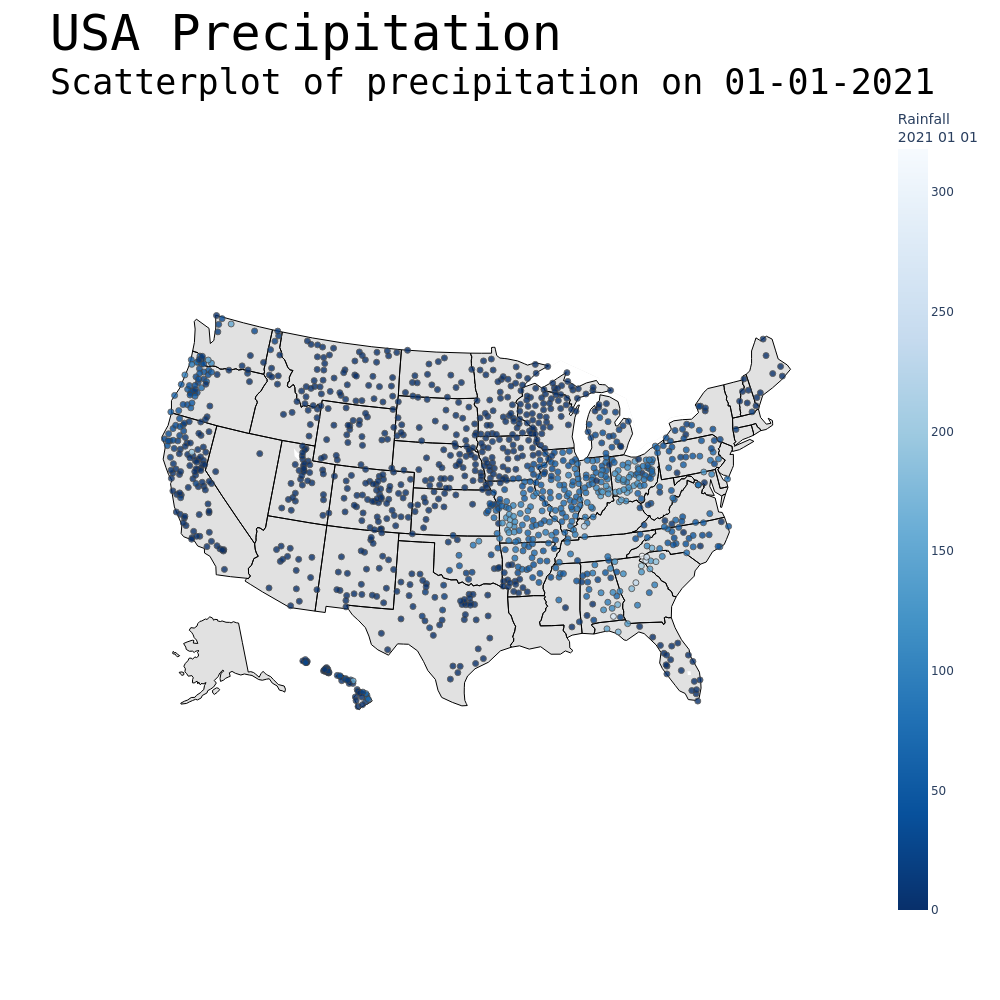

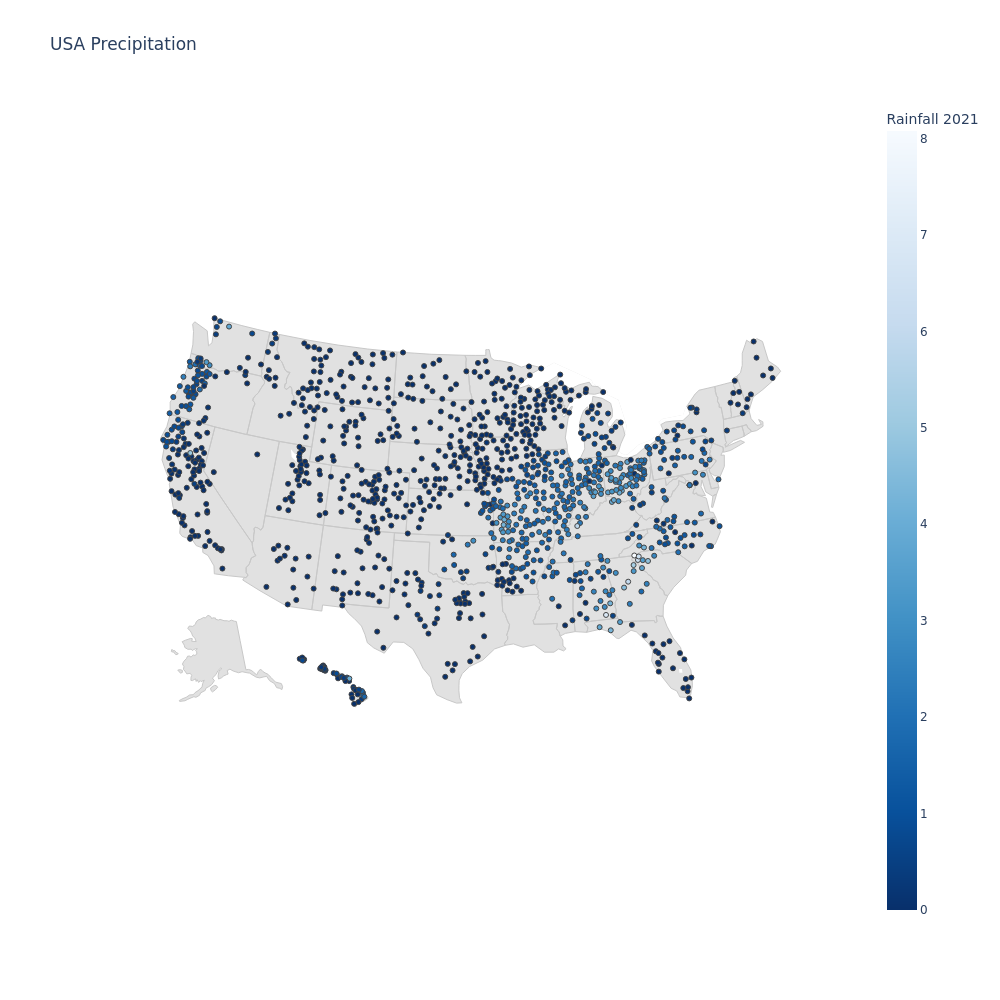

“I designed a challenge centred on data engineering, in which teams produced visualisations of live data downloaded from the US National Oceanic and Atmospheric Administration (NOAA), using a JupyterLab notebook on the Baskerville Portal. A gallery of their plots is shown above. I was very impressed by the quality of the work the contestants produced in a single afternoon; they even beat the run time of my own notebook by several seconds, using RAPIDS and Dask to accelerate and distribute their work! It was great to see the contestants grow as teams over the three days, and I enjoyed demystifying HPC for them with a data centre tour and talks from our partners NVIDIA and Lenovo. Also, thanks to OCF for providing the snacks!”

Jenny Wong

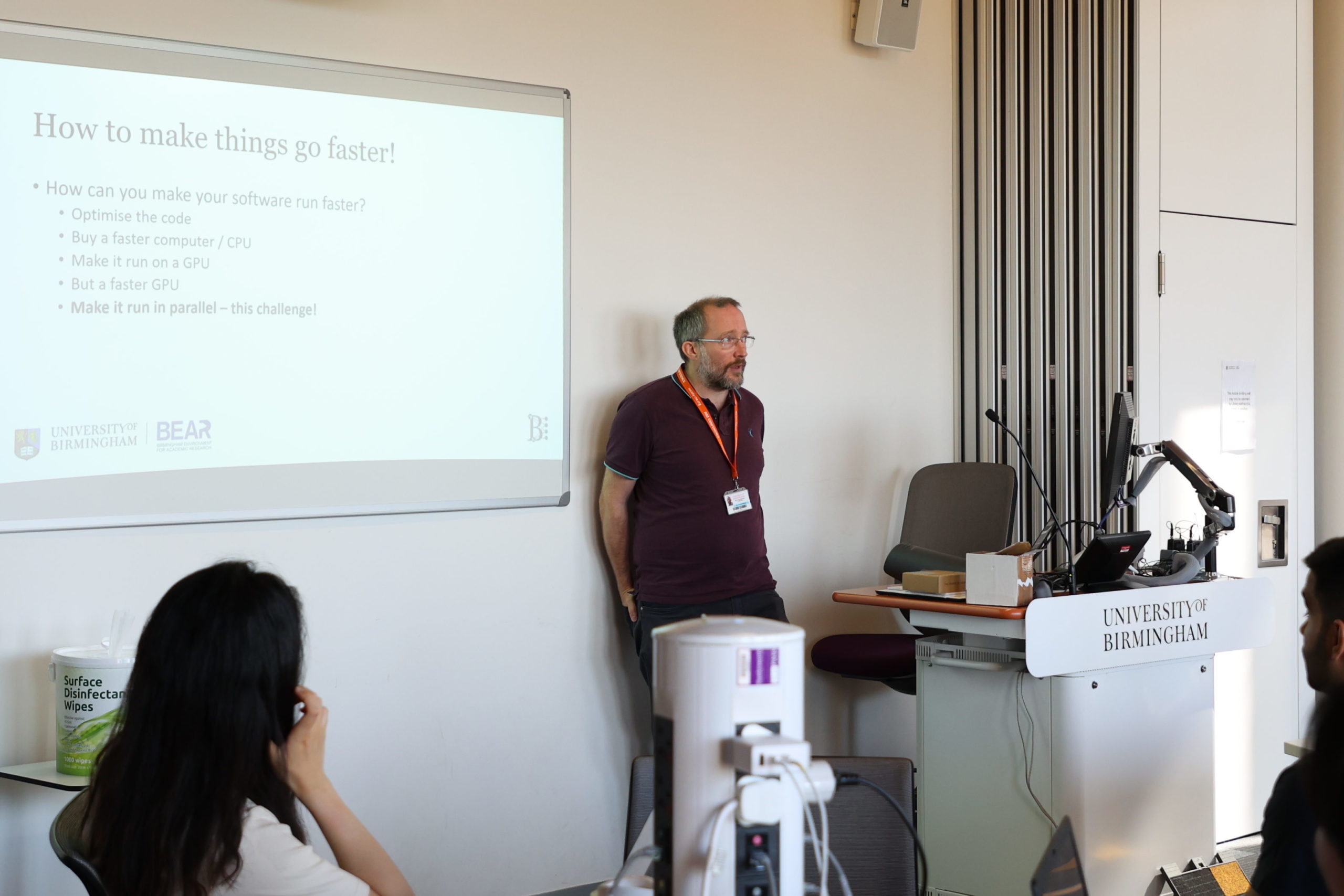

Day 2 (AM) Challenge 3 – Parallelisation

“Challenge 3 explored how to make your software run faster by using multiple CPUs or GPUs simultaneously: parallelisation! The teams were given a large matrix multiplication problem and a Python program that could solve it. They were shown how to run it on a single CPU core, multiple CPU cores, a single GPU and multiple GPUs. They were then challenged to work out how to process the most matrices in the shortest time, using any method they chose. Then, 15 minutes before the end of the challenge, they were given a new dataset of matrices, and points were awarded according to how many correct answers they produced in the shortest time.”

Andrew (Ed) Edmondson
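The challenge's own program isn't reproduced in this post. As a rough illustration of the multi-core CPU route only, here is a minimal sketch in standard-library Python that splits a batch of independent matrix multiplications across worker processes. The function names (`matmul`, `multiply_pairs`, `split_into_chunks`, `run_parallel`) are hypothetical, and the naive list-based multiply stands in for whatever numerical library the teams actually used; the GPU routes are not shown.

```python
from concurrent.futures import ProcessPoolExecutor

# Hypothetical representation: a matrix is a list of rows.
Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Naive O(n^3) product of two matrices stored as lists of rows."""
    if len(a[0]) != len(b):
        raise ValueError("inner dimensions do not match")
    b_cols = list(zip(*b))  # transpose b once so each column is a tuple
    return [[sum(x * y for x, y in zip(row, col)) for col in b_cols] for row in a]


def multiply_pairs(pairs: list[tuple[Matrix, Matrix]]) -> list[Matrix]:
    """Serial worker: multiply every (a, b) pair in one chunk, in order."""
    return [matmul(a, b) for a, b in pairs]


def split_into_chunks(items: list, n_chunks: int) -> list[list]:
    """Split items into at most n_chunks contiguous, near-equal chunks (order kept)."""
    n_chunks = max(1, min(n_chunks, len(items)))
    size, extra = divmod(len(items), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        end = start + size + (1 if i < extra else 0)  # first `extra` chunks get one more
        chunks.append(items[start:end])
        start = end
    return chunks


def run_parallel(pairs: list[tuple[Matrix, Matrix]], workers: int = 4) -> list[Matrix]:
    """Give each chunk to its own process; results are returned in input order."""
    chunks = split_into_chunks(pairs, workers)
    with ProcessPoolExecutor(max_workers=len(chunks)) as pool:
        # pool.map preserves chunk order, so flattening keeps the original order
        return [m for chunk in pool.map(multiply_pairs, chunks) for m in chunk]
```

From a script, one would call something like `run_parallel(pairs, workers=4)` inside an `if __name__ == "__main__":` block, which multiprocessing requires on platforms that start workers by spawning a fresh interpreter. Sending each worker one large chunk, rather than one task per matrix, keeps the cost of shipping matrices between processes down, a trade-off worth measuring when the goal is the shortest time.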

Day 2 (PM) Challenge 4 – Deep Learning

“On Wednesday afternoon we were given a presentation by Paul Graham from NVIDIA, who talked about his career path from Computational Physics undergraduate to NVIDIA via EPCC (formerly the Edinburgh Parallel Computing Centre), as well as his time on the Edinburgh comedy scene. The talk included some amazing videos of the work being done at NVIDIA, such as building digital twins and enabling robots to learn about a physical environment within a digital space, decreasing the learning time by many orders of magnitude.

The afternoon concluded with the fourth challenge of the week: Deep Learning using multiple GPUs. This introduced several HPC concepts participants wouldn't have seen before, such as the Message Passing Interface (MPI) and Uber's Horovod library for extending single-GPU deep learning frameworks to multiple GPUs. Despite the difficulty of the challenge, all of the groups made significant headway in adapting code that trains a neural network on the Fashion-MNIST dataset so that it runs on multiple GPUs. Hopefully, this will allow them to make full use of Baskerville's GPU capabilities in the years to come!”

James Allsopp
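The idea behind Horovod is data parallelism: each GPU (one MPI rank) trains a copy of the model on its own slice of each batch, and the gradients are averaged across ranks with an allreduce before every update. The single-process sketch below only simulates that averaging step in plain Python for a one-parameter linear model; it does not use Horovod or MPI, and the names `local_gradient`, `shard`, `allreduce_mean` and `train` are hypothetical.

```python
def local_gradient(w: float, xs: list[float], ys: list[float]) -> float:
    """Gradient of the mean squared error of the model y ≈ w * x over one shard."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def shard(data: list, n_workers: int, rank: int) -> list:
    """Round-robin slice of the batch handed to one simulated worker (rank)."""
    return data[rank::n_workers]


def allreduce_mean(values: list[float]) -> float:
    """Stand-in for an MPI/Horovod allreduce that averages one value per rank."""
    return sum(values) / len(values)


def train(xs: list[float], ys: list[float], n_workers: int = 4,
          lr: float = 0.01, steps: int = 200) -> float:
    """Simulated data-parallel gradient descent on a single process."""
    w = 0.0
    for _ in range(steps):
        # Each simulated rank computes a gradient on its own shard only...
        grads = [local_gradient(w, shard(xs, n_workers, r), shard(ys, n_workers, r))
                 for r in range(n_workers)]
        # ...then all ranks apply the same averaged update, keeping weights in sync.
        w -= lr * allreduce_mean(grads)
    return w
```

When every shard has the same size, the averaged gradient equals the full-batch gradient (up to floating-point rounding), so the simulated multi-worker run follows the same path as training on the whole batch on one device. In a real Horovod job, this averaging is handled by `hvd.DistributedOptimizer` wrapping the framework's own optimizer.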

Day 3 (AM) Challenge 5 – HPC Systems

“Jim Roche, Technical Computing and HPC Lead at Lenovo, kicked off the third and final day by inviting contestants to experience the practicalities involved in designing an HPC cluster. How do you deliver the best computing performance while minimising cost? How do you power the cluster while ensuring that your cooling system can extract the waste heat? What's the best way to configure your spine-leaf switch system so that your network can communicate efficiently?

This challenge added a new dimension to the BEAR Challenge, helping contestants develop a more holistic view of HPC from the hardware perspective, which is often an afterthought for end users.

Following the design challenge, Simon Thompson gave a careers talk about his path from his beginnings as a sysadmin at the University of York, to Architecture, Infrastructure and Systems Lead at the University of Birmingham, to his current role as HPC Solutions Manager at Lenovo. His talk emphasised the importance of maintaining a good relationship with vendors, which allowed a deeper partnership with Lenovo and nurtured the innovative, cutting-edge design of the Baskerville HPC system.”

Jenny Wong

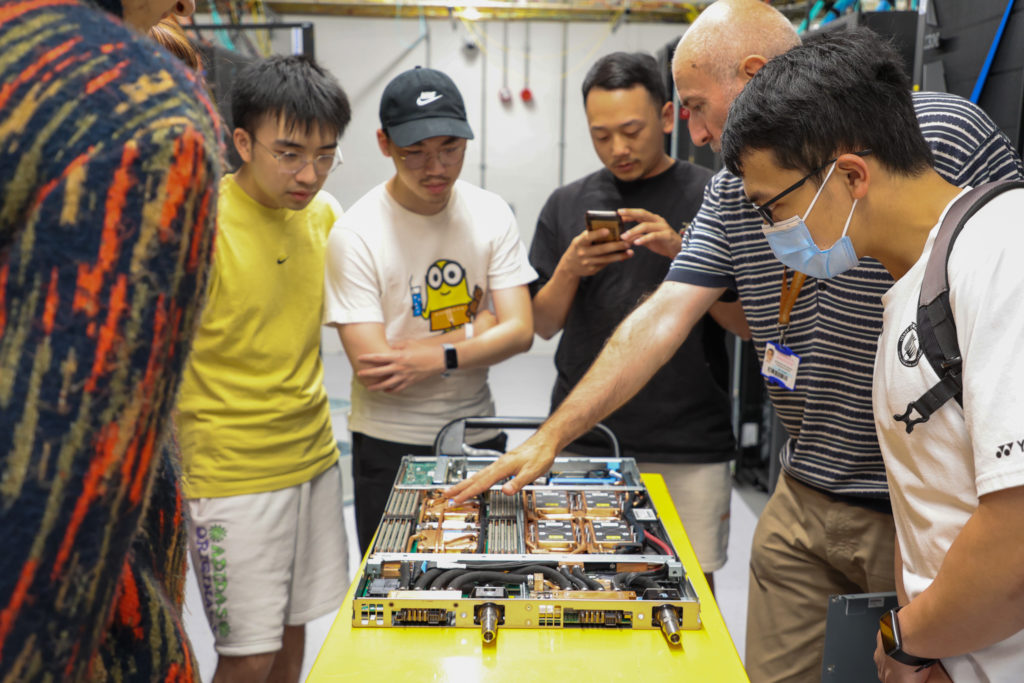

Day 3 (PM) – Data Centre Tour

To finish off the whole event, the teams of students were treated to a rare tour of our Research Data Centre…

“It is hard to picture what a supercomputer, or even storage, looks like. We all understand our own devices, laptops and smartphones, but a data centre? Well, that's on another level.

We took the teams on a tour of the data centre, which is hidden away (in plain sight) on Edgbaston Park Road. We were shown how the water cooling works, and the 11,000-volt generator that sits next to the data centre, ready to come on if there is ever a problem with the main electricity supply.

Jon Hunt from the Architecture, Infrastructure and Systems team showed us the inner workings of the data centre: the set-up room, the working room and then the main data centre room. He warned us how hot and how noisy it was going to be, and boy was he right. Because the data centre is water cooled [Editor: rather than air-cooled], the heat from the servers and other devices was immense and the noise was deafening. We could hardly hear each other, which limits how long you can work in this environment.

The students, like me, were amazed to see so many racks. Jon pulled a server (node) out of a rack and showed us how water is fed through the many copper pipes around the motherboard, CPU and RAM. Together, these systems make up BlueBEAR and Baskerville, the latter being the system the BEAR Challenge was run on. The looks on the students' faces were amazing to see; you could tell they realised how much work is required to get these systems up and running and to keep them stable.

Finally, Jon took the students into the plant room, where he showed them the humongous number of pipes that supply the data centre with cooling water.”

Aslam Ghumra

Thanks to…

The BEAR Challenge was a huge team effort and would not have been possible without support from our industry partners NVIDIA and Lenovo, who provided speakers and challenges for our teams, with Lenovo also supplying tablets as prizes for the winners! Thanks also to OCF for supplying vast amounts of food and drink to keep our students and supporting staff full of energy 🙂 Lastly, thanks to the students for taking part and engaging fully with all the challenges.

And the winner is…

To find out who won the BEAR Challenge 2022, read our winners' blog post for a follow-up interview with the winning team.