In this case study, we hear from Dr Jian Zhong (Geography, Earth and Environmental Science), who has been making use of BlueBEAR to enable his research in air quality modelling.

I am a Research Fellow in Urban Air Quality Modelling in Geography, Earth & Environmental Science. My research has focused on urban air quality modelling, urban boundary layer meteorology, computational fluid dynamics, large-eddy simulation of turbulence, street canyon flow, fast photochemistry modelling, and geographical data processing.

I have made unique scientific contributions: by developing and applying a range of state-of-the-art atmospheric models, I have improved my understanding of atmospheric processes and mechanisms. My research outputs have also contributed to BBC One Panorama, the UK Fluid Network Gallery, the Chief Medical Officer’s Annual Report, Exchange Exhibitions and Science Futures at Glastonbury Festival.

“For this air quality modelling application of task farming, the optimisation process has reduced weeks of model execution time to approximately 35 hours.”

What is your research about?

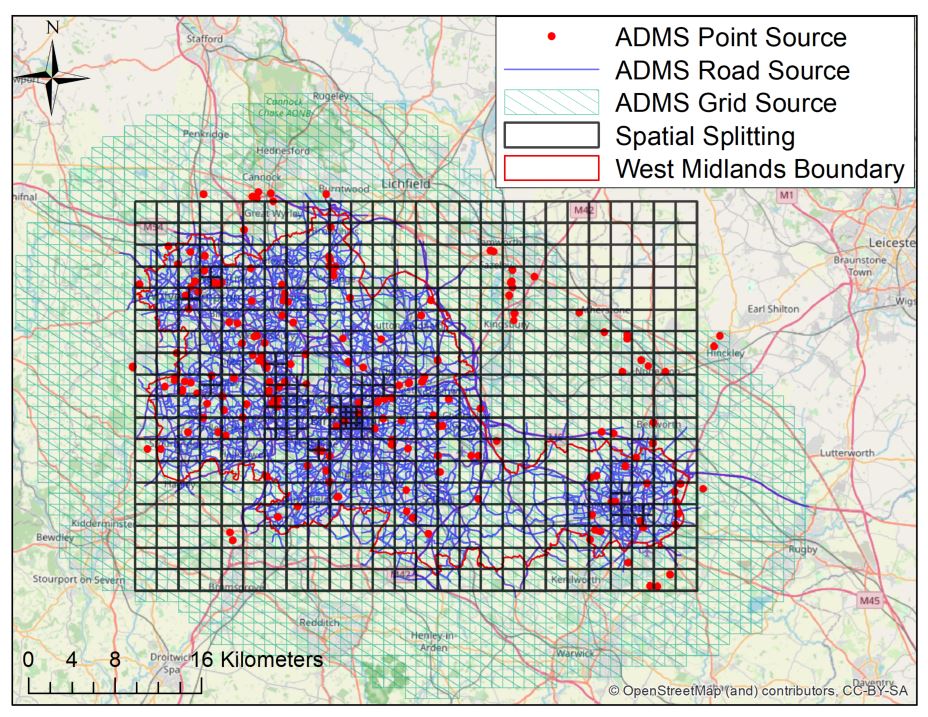

Air pollution has become the biggest environmental risk to public health. The mortality burden associated with ambient air pollution in the UK is about 28,000–36,000 deaths per year. It is important to better understand the sources and processes of air pollutants. High-resolution air quality models that combine emissions, chemical processes, dispersion and dynamical treatments are necessary to develop effective policies for clean air in urban environments. Air quality modelling is an important tool for investigating air quality (here using the West Midlands as a case study) and for assessing the impact of specific intervention scenarios on air quality within the region.

Why was BlueBEAR chosen?

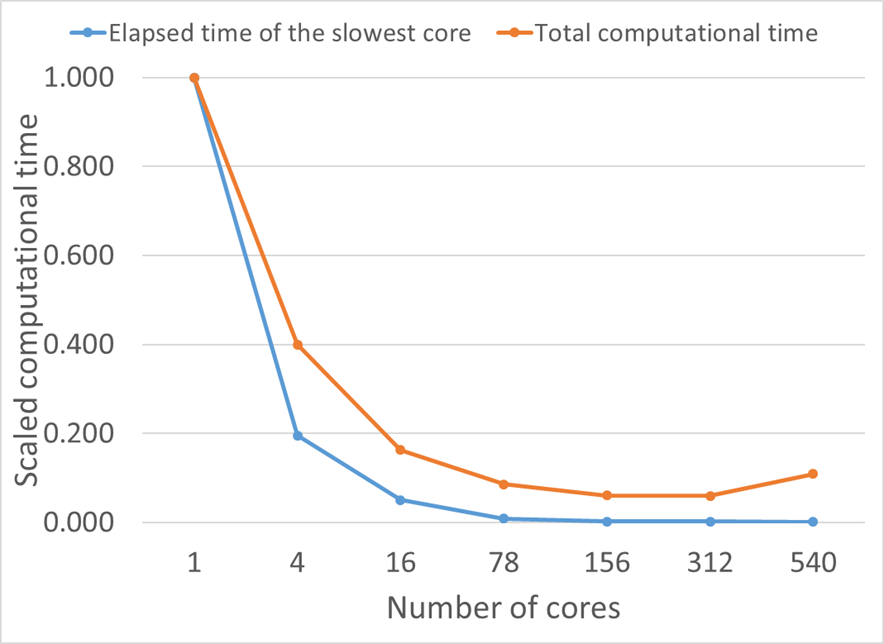

I demonstrated the application of task farming to reduce runtime for ADMS-Urban, a quasi-Gaussian plume air dispersion model, using BlueBEAR. The model represents the full range of source types (point, road and grid sources) occurring in an urban area at high resolution. The task farming approach was achieved by spatially splitting the computational domain. I implemented and evaluated the option to automatically split up a large model domain into smaller sub-regions, each of which can then be executed concurrently on BlueBEAR.
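As a rough illustration of the spatial splitting described above, the sketch below cuts a rectangular domain into a regular grid of equal tiles, one per task. This is not the ADMS-Urban implementation: the `Tile` class, the `split_domain` function, the 54 km by 40 km extent and the 27 by 20 layout are all hypothetical, chosen only so that the tile count comes to 540. A real split may also need buffer zones or other handling so that sources near a tile edge still contribute to neighbouring tiles.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tile:
    """One rectangular sub-region of the model domain (hypothetical type)."""
    index: int
    xmin: float
    ymin: float
    xmax: float
    ymax: float


def split_domain(xmin, ymin, xmax, ymax, nx, ny):
    """Split a rectangle into nx * ny equal tiles, numbered row by row."""
    if nx < 1 or ny < 1:
        raise ValueError("nx and ny must be positive")
    dx = (xmax - xmin) / nx
    dy = (ymax - ymin) / ny
    tiles = []
    for row in range(ny):
        for col in range(nx):
            tiles.append(
                Tile(
                    index=row * nx + col,
                    xmin=xmin + col * dx,
                    ymin=ymin + row * dy,
                    xmax=xmin + (col + 1) * dx,
                    ymax=ymin + (row + 1) * dy,
                )
            )
    return tiles


# Assumed example extent in metres; not the real West Midlands domain.
tiles = split_domain(0.0, 0.0, 54_000.0, 40_000.0, nx=27, ny=20)
print(len(tiles), tiles[0], tiles[-1])
```

Because each tile carries its own bounds and index, every sub-region can be handed to an independent model run without the tasks needing to communicate.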

How has BlueBEAR been useful?

An array job using 540 cores, one per sub-domain under the task farming approach, was submitted to BlueBEAR using the Linux version of the ADMS-Urban model. The overall elapsed time for the typical full-year 2016 baseline case was about 35 h. For this air quality modelling application of task farming, the optimisation process has reduced weeks of model execution time to approximately 35 h for a single model configuration of annual calculations.
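To show how one task in such an array job might find its own sub-domain, here is a minimal sketch. It assumes a Slurm-style scheduler that exposes the task number in the `SLURM_ARRAY_TASK_ID` environment variable and a zero-based array of 540 tasks; the 27 by 20 layout and the function names are hypothetical, and the actual ADMS-Urban invocation is not shown because the source does not describe it.

```python
import os


def subdomain_for_task(task_id, nx, ny):
    """Map a zero-based array task index to a (col, row) tile position."""
    if not 0 <= task_id < nx * ny:
        raise ValueError(f"task_id {task_id} outside 0..{nx * ny - 1}")
    row, col = divmod(task_id, nx)
    return col, row


def current_task_id(default=0):
    """Read the array index from the environment (assumed Slurm-style)."""
    return int(os.environ.get("SLURM_ARRAY_TASK_ID", default))


if __name__ == "__main__":
    NX, NY = 27, 20  # hypothetical layout giving 540 sub-domains
    col, row = subdomain_for_task(current_task_id(), NX, NY)
    # A real task would now launch the model for this sub-domain only.
    print(f"Task {current_task_id()} handles tile column {col}, row {row}")
```

Since the tasks run concurrently, the elapsed time of the whole job is set by the slowest sub-domain rather than by the sum of all sub-domain runtimes.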

We were so pleased to hear how Jian has been able to make use of what is on offer from Advanced Research Computing, particularly how he has made use of multiple cores on BlueBEAR HPC. If you have any examples of how it has helped your research, do get in contact with us at bearinfo@contacts.bham.ac.uk. We are always looking for good examples of the use of High Performance Computing to nominate for HPC Wire Awards; see our recent winners for more details.