Danielle Mason, from the What Works Centre for Local Economic Growth, discusses the importance of logic models when applying for local economic growth funding and highlights a new training programme to help support their use.

At What Works Growth, we want to improve the use of evaluation and evidence for local economic growth policy.

The Green Book process can help to ensure that policy is evidence-based and informed by robust estimates of likely impact. These impacts should be derived from previous evaluations of what has worked and what hasn’t, whenever possible. That’s why What Works Growth joined the Green Book Network and why we champion its efforts to support robust and practical use of the Green Book and the business case process for appraising government-funded projects.

Investing in Local Economic Growth

There are some points of controversy when it comes to using the Green Book to make decisions about investments in local economic growth. For example, there has been concern that the cost-benefit ratio calculations required by the Green Book can favour investment in some places rather than others. This was a key driver of the 2020 review of the Green Book (the findings and the Government’s response can be found here). It is also an issue that we have written about before, but in this blog, I want to discuss other, more practical barriers to ensuring that the Green Book process can support good decision-making for local economic growth.

High-quality impact evaluation

First, local growth interventions, particularly those designed and delivered locally rather than as part of a centrally managed programme, are rarely the subject of the type of high-quality impact evaluation that we champion at What Works Growth. That is, the type that uses a comparison to estimate what would have happened without the intervention, and therefore gives us reliable information on the difference that the intervention made. As a result, we lack reliable evidence when, as part of the Green Book process for new projects, we try to estimate the likely impact of new transport, regeneration, or business interventions from past experience. The comparisons of likely costs and benefits that are included in business cases are therefore not as robust as they could be.

To help address this, we run regular free training for local government on the benefits of impact evaluation and how to commission a high-quality evaluation successfully. More locally commissioned impact evaluations will mean more evidence is available to support better cost-benefit analysis in future.

Making use of logic models

In recent years, with more local growth funding taking the form of pots which areas bid into, another practical question has become increasingly relevant for local officials. As part of the business case development for potential projects, officials are often required to present the rationale for an intervention in the form of an evidence-based ‘Logical Change Process’, to use the Green Book terminology. Our users have highlighted this as an area where they feel they need more support, and so this year we have introduced a new free training course: Making use of logic models.

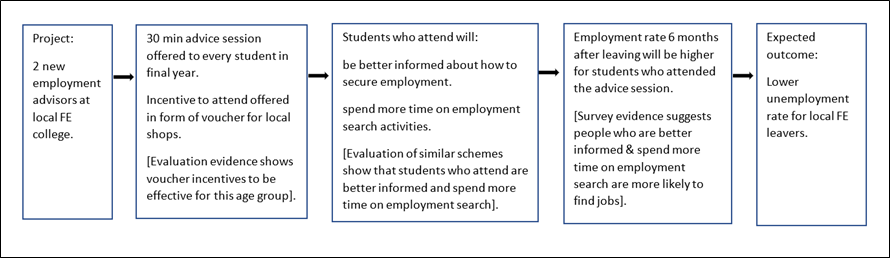

A ‘logic model’ is a way of illustrating the causal linkages between each step in a process, in this case, the process by which a project will deliver its expected outcomes. Good policy design requires those developing a project to consider carefully each link in the causal chain and assess whether it is evidence-based. If there is no evidence to suggest that one step will lead to another, the project might not deliver the expected outcomes. Ideally, each step would be based on robust evaluation evidence, but other types of evidence, such as economic theory, can also be used.

A simple fictional illustration is provided below. The intervention involves employment advisors at a local Further Education (FE) college. A 30-minute advice session with an advisor will be offered to every student in their final year. Students will be offered voucher incentives to attend, because the college has evidence from a previous project that these are a good way to increase attendance at non-compulsory sessions. Evaluation of similar schemes has shown that students who get advice are, all else being equal, better informed and spend more time on employment search than those who don’t, so the college expects to see this effect for students who attend. Finally, the college draws on survey evidence which shows that more employment search activity increases the chances of young people finding a job, so the next link in the logic model is that the students who attend will be more likely to find jobs than they otherwise would have been. This should result in a lower unemployment rate for local FE leavers.
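For readers who find it easier to think in structured terms, the fictional FE college example can also be written down as a simple chain of cause-and-effect links, each tagged with the evidence behind it. The sketch below is only an illustration and is not part of the Green Book or of our training materials: the names `Link`, `unsupported_links` and `fe_advice_model` are made up for this purpose, and the final link is left without evidence simply because the example above does not name a source for that step.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Link:
    """One step in a logic model: a cause, its expected effect, and the evidence for it."""
    cause: str
    effect: str
    evidence: Optional[str] = None  # None means no supporting evidence has been recorded


def unsupported_links(chain: List[Link]) -> List[Link]:
    """Return the links in the chain that have no evidence recorded against them."""
    return [link for link in chain if not link.evidence]


# Hypothetical encoding of the fictional FE college example.
fe_advice_model = [
    Link(
        cause="Voucher incentives are offered",
        effect="Final-year students attend the 30-minute advice session",
        evidence="Previous college project on attendance at non-compulsory sessions",
    ),
    Link(
        cause="Students attend the advice session",
        effect="Students are better informed and spend more time on employment search",
        evidence="Evaluation of similar schemes",
    ),
    Link(
        cause="Students spend more time on employment search",
        effect="Students are more likely to find a job",
        evidence="Survey evidence",
    ),
    Link(
        cause="More students find jobs",
        effect="Lower unemployment rate for local FE leavers",
        # No evidence source is named for this step in the example (an assumption of this sketch).
    ),
]

# List any steps that still need evidence before the business case is submitted.
for link in unsupported_links(fe_advice_model):
    print(f"No evidence recorded: {link.cause} -> {link.effect}")
```

Laying the chain out like this makes it easy to spot which steps rest on evaluation evidence, which rest on weaker evidence, and which still need support.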

We hope that our logic model training will help officials, who often face tight deadlines, to deliver business cases for local growth projects. But logic models are not only important for developing good business cases and securing funding. Getting more places to focus on the causal links between the projects they are funding and the local economic outcomes they want should also help to improve policy design and reduce the risk that projects fail to deliver improvements. A robust logic model will also aid high-quality evaluation, which can then be fed back into future policy design. All of this should help us to achieve our goal of more evidence-based policy-making in local growth.

View details of What Works Growth’s logic model training sessions.

This blog was written by Danielle Mason, Head of Policy, What Works Centre for Local Economic Growth.

Disclaimer:

The views expressed in this analysis post are those of the authors and not necessarily those of City-REDI / WMREDI or the University of Birmingham.